An experiment in turning AI-generated SVGs into something animated, reusable, and actually useful for storytelling.

This post was written as part of my entry for the Gemini Live Agent Challenge. #GeminiLiveAgentChallenge

What originally inspired me was a very simple question.

Models were getting surprisingly good at two things at once: generating SVGs and understanding text. That made me wonder whether those two strengths could be combined into something much bigger. If a model can understand a story and also generate vector characters and scenes, could those SVGs become animated too? And if they could, could that go beyond moving images and become a real cartoon-making system?

That question eventually turned into Cartoon 2D.

AI should direct the cartoon. The engine should make it playable.

The Real Problem

A lot of AI creative tools are excellent at giving you one impressive result.

But once you try to make something longer, the cracks start to show. Characters drift. Motion gets weird. Timing becomes inconsistent. Costs rise because too much has to be regenerated from scratch. And even when the output looks good in a still frame, it may stop feeling good the second it starts moving.

That was probably the most important realization in the project: making something that looks nice is not the same as making something that animates well.

And if the goal is long-form storytelling, that difference matters a lot.

Why Long-Form Cartoons Make Sense

I was not especially interested in building a one-shot clip generator. What felt more interesting was the possibility of building something that could support longer stories.

Long-form cartoons are a very good fit for this kind of system because they naturally have recurring characters and lots of dialogue. That means a lot of expensive work only has to be done once.

If you can rig a character once, stabilize their design once, assign them a voice once, and build a reusable set of motion patterns once, the whole system becomes much cheaper and much more predictable. Instead of regenerating everything from zero in every new scene, you start reusing the same actor across the story.

That is a big part of why this project made sense to me. Reuse is not just a bonus. It is the whole economic advantage.

The Architecture Shift

One of the biggest lessons from earlier attempts (ADK + bidi-streaming) was that animation does not just need generation. It needs control.

You need a timeline. You need scrubbing. You need previews. You need to be able to fix one scene without destroying the next. You need to reuse a character without reinventing them every time they appear.

That led to the architectural decision that made the whole project start working:

AI should describe intent. Deterministic code should execute it.

Once I stopped treating the model like a system that should directly output final animation and started treating it more like a creative director, the project became much more coherent. The model could focus on structure, description, scene intent, motion intent, and assets. The runtime could focus on validation, compilation, playback, reuse, and export.
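To make that split a bit more concrete, here is a minimal sketch of a deterministic gate sitting between the model and the runtime. The `MotionIntent` shape, its field names, and `validateIntent` are hypothetical names chosen for illustration; they are not taken from the Cartoon 2D codebase.

```typescript
// Hypothetical shape of what the model is asked to return: intent, not final animation.
interface MotionIntent {
  actorId: string;
  action: string; // e.g. "wave" or "walk"
  durationSec: number;
}

type ValidationResult =
  | { ok: true; intent: MotionIntent }
  | { ok: false; errors: string[] };

// Deterministic gate: untrusted model output either passes every check or is rejected.
function validateIntent(raw: unknown, knownActors: ReadonlySet<string>): ValidationResult {
  if (typeof raw !== "object" || raw === null) {
    return { ok: false, errors: ["intent must be an object"] };
  }
  const fields = raw as Record<string, unknown>;
  const actorId = fields["actorId"];
  const action = fields["action"];
  const durationSec = fields["durationSec"];
  const errors: string[] = [];

  if (typeof actorId !== "string" || !knownActors.has(actorId)) {
    errors.push("actorId must name a known actor");
  }
  if (typeof action !== "string" || action.length === 0) {
    errors.push("action must be a non-empty string");
  }
  if (typeof durationSec !== "number" || !isFinite(durationSec) || durationSec <= 0) {
    errors.push("durationSec must be a positive number");
  }
  if (errors.length > 0) {
    return { ok: false, errors };
  }
  return {
    ok: true,
    intent: {
      actorId: actorId as string,
      action: action as string,
      durationSec: durationSec as number,
    },
  };
}
```

The point of returning a list of errors instead of throwing on the first one is that a rejected intent can be sent back to the model with every problem named at once.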

How Cartoon 2D Works

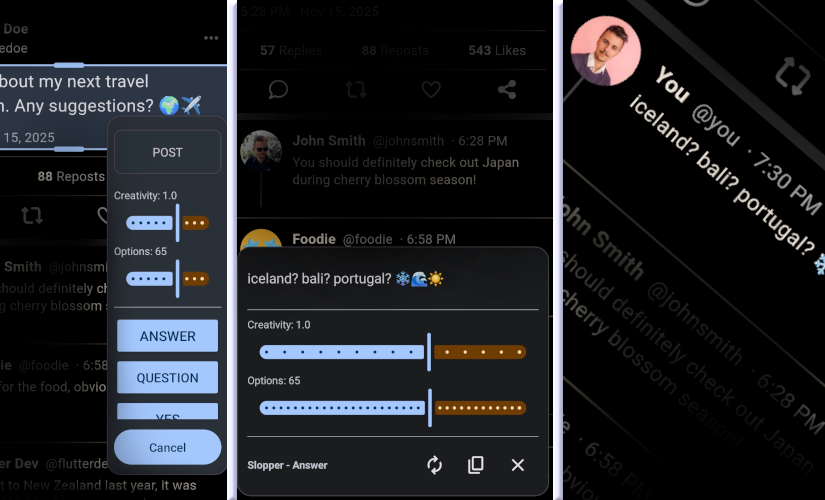

The workflow starts with a text prompt.

Gemini generates structured scene beats, comic-style scene images, dialogue cues, and motion intent. From there, the system turns those outputs into something the runtime can actually use.

Characters are turned into SVG rigs with pivots, bones, views, and limits. Motion intent gets compiled into playable clips using a deterministic TypeScript runtime built around a canonical IK graph. Dialogue goes through Google Cloud Text-to-Speech, which then feeds timing and lip-sync data back into the animation layer. Scenes land on a timeline where they can be previewed, adjusted, and exported.
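As a rough idea of what "pivots, bones, and limits" can mean in code, the sketch below solves a single two-bone limb with textbook analytic IK and clamps the elbow to a joint limit. This is an assumption-heavy illustration: the `Limb` and `LimbPose` types and both functions are hypothetical, and the post does not describe how the project's canonical IK graph is actually implemented.

```typescript
interface Point { x: number; y: number }

// Hypothetical two-bone limb (e.g. upper arm + forearm) hanging off a rig pivot.
interface Limb {
  pivot: Point;
  upperLen: number;
  lowerLen: number;
  elbowLimits: { min: number; max: number }; // allowed bend in radians
}

interface LimbPose { rootAngle: number; elbowAngle: number }

function clamp(value: number, lo: number, hi: number): number {
  return Math.min(hi, Math.max(lo, value));
}

// Analytic two-bone IK via the law of cosines, with the elbow clamped to the rig's limits.
// If the target is out of reach, the limb points toward it without reaching it.
function solveLimb(limb: Limb, target: Point): LimbPose {
  const dx = target.x - limb.pivot.x;
  const dy = target.y - limb.pivot.y;
  const a = limb.upperLen;
  const b = limb.lowerLen;
  const cosElbow = clamp((dx * dx + dy * dy - a * a - b * b) / (2 * a * b), -1, 1);
  const elbowAngle = clamp(Math.acos(cosElbow), limb.elbowLimits.min, limb.elbowLimits.max);
  const rootAngle =
    Math.atan2(dy, dx) - Math.atan2(b * Math.sin(elbowAngle), a + b * Math.cos(elbowAngle));
  return { rootAngle, elbowAngle };
}

// Forward kinematics: where the end of the limb lands for a given pose.
function endEffector(limb: Limb, pose: LimbPose): Point {
  const elbowX = limb.pivot.x + limb.upperLen * Math.cos(pose.rootAngle);
  const elbowY = limb.pivot.y + limb.upperLen * Math.sin(pose.rootAngle);
  const total = pose.rootAngle + pose.elbowAngle;
  return {
    x: elbowX + limb.lowerLen * Math.cos(total),
    y: elbowY + limb.lowerLen * Math.sin(total),
  };
}
```

Because the solver is a pure function of the rig and the target, the same motion intent always produces the same pose, which is what makes scrubbing and re-export predictable.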

The important thing is that the model is not being asked to directly produce a finished long cartoon frame by frame. It is being asked to produce creative direction and structured intent. The engine then has to make that intent playable.

That was the only way I found to make the system feel less like a prompt demo and more like actual software.

The Newest Models Really Helped

One thing that became very obvious while building this is that the newest models genuinely matter.

For rigging especially, the latest Gemini Pro models were a real unlock. They did a noticeably better job at returning clean, good-looking SVG assets from image references. That mattered more than I expected, because weak rig output makes every downstream problem harder. If the character starts from a bad place, animation gets harder, cleanup gets harder, and consistency gets harder.

So for this kind of system, using the strongest models really did improve the result in a meaningful way.

The Hardest Part Is Still Animation

The biggest struggle in the whole project was animation quality.

And honestly, it still is.

A character can look great in a generated image and still move in a way that feels awkward, floaty, stiff, or just plain wrong when it starts playing back. That gap between “good image” and “good animation” is huge.

So I do not want to present this as some magical workflow where you paste in a full cartoon script and instantly get a polished finished episode. It is not that. Not yet.

Right now, the system works best step by step:

- scene by scene

- actor by actor

- clip by clip

That is the honest version.

And I think that is fine, because animation quality is hard and deserves real attention. If more focused research had gone into the animation layer itself, I think the result could have been much stronger. In many ways, that still feels like the biggest area for improvement.

What Was Difficult Besides Animation

Consistency was another major challenge.

At first, characters came back looking different in every scene. The fix was an identity-lock system: save reference portraits for actors, then feed those back into later generations so Gemini has a stable visual anchor.

Rigging was also messy. Sometimes generated characters came back structurally weak, missing pieces, over-segmented, or arranged in ways that were hard to animate. To keep the pipeline usable, I added deterministic cleanup and repair passes that normalize the rig output before playback.
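A repair pass of this kind might look something like the sketch below. The `RigPart` shape and the three specific repairs (dropping duplicates, re-parenting dangling parts, defaulting missing pivots) are my own illustrative assumptions, not a description of the passes Cartoon 2D actually runs.

```typescript
interface RigPart {
  id: string;
  parentId: string | null; // null marks the root part
  pivot?: { x: number; y: number };
  bbox: { x: number; y: number; width: number; height: number };
}

interface RepairResult { parts: RigPart[]; fixes: string[] }

function repairRig(input: readonly RigPart[]): RepairResult {
  const fixes: string[] = [];
  const ids = new Set<string>();
  const parts: RigPart[] = [];

  // Pass 1: drop duplicate ids, keeping the first occurrence. Copy so the input is not mutated.
  for (const part of input) {
    if (ids.has(part.id)) {
      fixes.push(`dropped duplicate part "${part.id}"`);
      continue;
    }
    ids.add(part.id);
    parts.push({ ...part });
  }
  const first = parts[0];
  if (first === undefined) return { parts, fixes };

  // Pass 2: guarantee exactly one root, then re-parent anything dangling onto it.
  let root = parts.find((p) => p.parentId === null);
  if (root === undefined) {
    first.parentId = null;
    root = first;
    fixes.push(`promoted "${first.id}" to root`);
  }
  for (const part of parts) {
    if (part === root) continue;
    const parent = part.parentId;
    if (parent === null || parent === part.id || !ids.has(parent)) {
      part.parentId = root.id;
      fixes.push(`re-parented "${part.id}" to "${root.id}"`);
    }
  }

  // Pass 3: a part without a pivot rotates around the centre of its bounding box.
  for (const part of parts) {
    if (part.pivot === undefined) {
      part.pivot = { x: part.bbox.x + part.bbox.width / 2, y: part.bbox.y + part.bbox.height / 2 };
      fixes.push(`defaulted pivot for "${part.id}"`);
    }
  }
  return { parts, fixes };
}
```

This sketch does not detect longer parent cycles; a fuller version would need a graph walk for that. Returning the list of fixes alongside the repaired rig makes it possible to show the user what was changed instead of silently altering the character.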

The editor itself was also a big part of the work. The timeline UI, scrubbing, audio placement, scene previews, and export logic were not “just AI problems.” They were product and engineering problems, and they took a lot of iteration.
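Scrubbing is a good example of an editor problem that has nothing to do with the model. A minimal sketch, assuming scenes are laid end to end and identified by hypothetical `SceneClip` records, maps a global playhead time to a scene and a local time inside it:

```typescript
interface SceneClip { id: string; durationSec: number }
interface Playhead { sceneId: string; localTimeSec: number }

// Map a global timeline position to the scene under the playhead.
// Scene start times are derived from durations, so editing one scene's
// content never changes what plays inside the scenes after it.
function scrubTo(scenes: readonly SceneClip[], timeSec: number): Playhead | null {
  const t = Math.max(timeSec, 0);
  let sceneStart = 0;
  let last: SceneClip | null = null;
  for (const scene of scenes) {
    const sceneEnd = sceneStart + scene.durationSec;
    if (t < sceneEnd) {
      return { sceneId: scene.id, localTimeSec: t - sceneStart };
    }
    sceneStart = sceneEnd;
    last = scene;
  }
  // At or past the end: pin to the final frame of the last scene (null for an empty timeline).
  return last === null ? null : { sceneId: last.id, localTimeSec: last.durationSec };
}
```

With two scenes of 2 and 3 seconds, a playhead at 2.5 seconds lands half a second into the second scene.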

Lip sync added another layer. Dialogue audio is generated with Google Cloud Text-to-Speech, and timing data is converted into visemes that drive SVG mouth shapes or jaw motion. That meant the audio system, the rigging system, and the playback system all had to agree on the same structure.
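The timing-to-viseme step could be sketched roughly as follows. Everything here is an assumption for illustration: the `TimedPhoneme` input shape, the five-shape `Viseme` set, and the tiny mapping table are made up, and the format of the timing data the project actually receives is not shown in this post.

```typescript
type Viseme = "rest" | "open" | "closed" | "round" | "wide";

interface TimedPhoneme { phoneme: string; startSec: number; endSec: number }
interface VisemeKey { viseme: Viseme; startSec: number; endSec: number }

// Hypothetical, deliberately tiny mapping; a real table would cover a full phoneme set.
const PHONEME_TO_VISEME = new Map<string, Viseme>([
  ["a", "open"], ["e", "wide"], ["i", "wide"], ["o", "round"], ["u", "round"],
  ["m", "closed"], ["b", "closed"], ["p", "closed"],
]);

const EPSILON = 1e-6;

// Assumes phonemes are sorted by start time and do not overlap.
// Unknown phonemes fall back to "rest", which is a simplification.
function toVisemeTrack(phonemes: readonly TimedPhoneme[]): VisemeKey[] {
  const track: VisemeKey[] = [];
  const push = (viseme: Viseme, startSec: number, endSec: number): void => {
    const prev = track[track.length - 1];
    if (prev !== undefined && prev.viseme === viseme && Math.abs(prev.endSec - startSec) < EPSILON) {
      prev.endSec = endSec; // merge adjacent keys that share a mouth shape
    } else {
      track.push({ viseme, startSec, endSec });
    }
  };
  let cursor = 0;
  for (const p of phonemes) {
    if (p.startSec - cursor > EPSILON) push("rest", cursor, p.startSec); // fill silence gaps
    push(PHONEME_TO_VISEME.get(p.phoneme) ?? "rest", p.startSec, p.endSec);
    cursor = p.endSec;
  }
  return track;
}

// Which mouth shape should the rig show at a given time?
function visemeAt(track: readonly VisemeKey[], timeSec: number): Viseme {
  for (const key of track) {
    if (timeSec >= key.startSec && timeSec < key.endSec) return key.viseme;
  }
  return "rest";
}
```

The track is the shared structure: the audio side produces it, the rig side needs a mouth shape for each viseme name, and playback only ever calls something like `visemeAt`.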

How It Was Built

This project was built in a very iterative way, and AI-assisted development was a real part of that process.

Antigravity, Codex, and Claude all helped at different points with architecture exploration, implementation, refactoring, debugging, and moving through ideas more quickly. That made it easier to test different directions and keep momentum while building.

But those tools did not remove the hard part. They mostly helped me get to the hard part faster.

The real work was still deciding where AI should be trusted, where deterministic code was necessary, and how much structure the system needed in order to stay usable.

One Direction That Could Improve It a Lot

One idea I keep coming back to is that the system could become much stronger if more of the animation vocabulary were predefined up front.

For example, instead of asking the model to invent every object class and every kind of motion from scratch, a better system might predefine more of that world in advance:

- known object types

- known rig families

- known animation categories

- known motion libraries

That would make the system more deterministic, and I think it would also provide the quality bump the project still needs.

In other words, one of the best next steps may not be “let the model invent everything,” but “let the model choose intelligently from a stronger predefined animation language.”

That feels like a very practical path forward.
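A small sketch of what "choosing from a predefined language" might look like in code. The rig families, categories, and library entries below are placeholders I made up for illustration; they are not Cartoon 2D's real vocabulary.

```typescript
type RigFamily = "biped" | "quadruped" | "prop";
type MotionCategory = "locomotion" | "gesture" | "idle";

interface MotionEntry {
  category: MotionCategory;
  families: readonly RigFamily[];
}

// Hypothetical library; the entries are placeholders, not the project's real clips.
const MOTION_LIBRARY = new Map<string, MotionEntry>([
  ["walk", { category: "locomotion", families: ["biped", "quadruped"] }],
  ["wave", { category: "gesture", families: ["biped"] }],
  ["idle_breathe", { category: "idle", families: ["biped", "quadruped"] }],
  ["spin", { category: "idle", families: ["prop"] }],
]);

type Resolution =
  | { ok: true; name: string; entry: MotionEntry }
  | { ok: false; reason: string };

// The model only picks a name; anything outside the library or wrong for the rig is rejected.
function resolveMotion(name: string, family: RigFamily): Resolution {
  const entry = MOTION_LIBRARY.get(name);
  if (entry === undefined) {
    return { ok: false, reason: `unknown motion "${name}"` };
  }
  if (entry.families.indexOf(family) === -1) {
    return { ok: false, reason: `"${name}" is not defined for ${family} rigs` };
  }
  return { ok: true, name, entry };
}

// Names that could be listed in the prompt so the model chooses instead of inventing.
function allowedMotions(family: RigFamily): string[] {
  const names: string[] = [];
  MOTION_LIBRARY.forEach((entry, name) => {
    if (entry.families.indexOf(family) !== -1) names.push(name);
  });
  return names;
}
```

The same table serves both directions: `allowedMotions` constrains what the model is offered, and `resolveMotion` checks what it actually returned.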

The Google Stack

The project leans heavily on Google’s ecosystem.

Gemini is the creative layer. Gemini 3.1 Flash Image Preview is used for storyboard and scene image generation. Gemini 3.1 Pro Preview is used for heavier reasoning tasks like rig drafting, motion planning, and structured asset generation.

Google Cloud Text-to-Speech is used for dialogue generation and timing data that drives lip sync.

The application itself is deployed on Google Cloud Run, and server-side FFmpeg is used to package rendered frames and audio into MP4 output.
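For the packaging step, a minimal sketch of how the FFmpeg arguments might be assembled is shown here. The `ExportJob` shape and the example file names are hypothetical, and the exact command the project runs is not shown in this post; the flags themselves (`-framerate`, `-i`, `-c:v`, `-pix_fmt`, `-c:a`, `-shortest`, `-y`) are standard FFmpeg options.

```typescript
// Hypothetical description of one export: numbered frames plus one audio track.
interface ExportJob {
  framePattern: string; // e.g. "frames/frame_%05d.png"
  fps: number;
  audioPath: string;
  outputPath: string;
}

// Build the argument list for an `ffmpeg` invocation that muxes frames and audio into MP4.
function buildFfmpegArgs(job: ExportJob): string[] {
  if (!(job.fps > 0)) {
    throw new Error("fps must be a positive number");
  }
  return [
    "-y",                            // overwrite the output file if it exists
    "-framerate", String(job.fps),   // input frame rate for the image sequence
    "-i", job.framePattern,          // input 0: rendered frames
    "-i", job.audioPath,             // input 1: dialogue audio
    "-c:v", "libx264",               // H.264 video
    "-pix_fmt", "yuv420p",           // pixel format most players accept
    "-c:a", "aac",                   // AAC audio
    "-shortest",                     // stop at the shorter of video and audio
    job.outputPath,
  ];
}
```

On a Node.js server, an array like this can be passed to `child_process.spawn("ffmpeg", args)`, which avoids shell quoting issues with file paths.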

The API integration side was actually fairly smooth. The harder engineering work was in the translation layer: taking creative, probabilistic output and turning it into something stable enough to animate and edit.

What I Learned

The biggest lesson from this project is that architecture matters more than novelty.

The key question was never just “which model should I call?” The real question was: what should the model be responsible for, and what should code be responsible for?

Once that split became clear, the project improved in every direction. It became more controllable, more reusable, more predictable, and much easier to think about.

I also came away believing even more strongly that guardrails are not the enemy of creativity. In production systems, they are often what makes creativity useful.

And maybe most importantly, I learned that in animation it is better to block bad output than to pretend it is good.

Where I Think This Is Going

Even with all the rough edges, I think the prototype turned out well.

It no longer feels like a toy prompt demo. It feels like the beginning of a real direction: AI-assisted cartoon production where the model handles creative intent and the runtime handles validation, reuse, playback, and editing.

That is the part I find exciting.

Not the idea of replacing animation with prompting, but the idea of building animation software that becomes dramatically faster and more useful because AI is embedded in the right places.

That is what Cartoon 2D is trying to do.

AI should direct the cartoon. The engine should make it playable.

One thing that did not make it into the final version was the SFX system. It worked pretty well on the free tier of Google Colab and even locally on my MacBook.

The setup looked like this:

!pip install fastapi uvicorn pydantic diffusers transformers accelerate soundfile pyngrok nest-asyncio torchsde

The real blocker was deployment cost. To run it properly, I really needed a dedicated T4-class GPU, and that was more than I could justify for this prototype at the moment. So for now, sound effects are not generated in the final deployed version.